🖼️ Example gallery

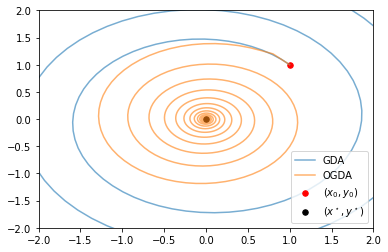

Optimistic Gradient Descent in a Bilinear Min-Max Problem
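
For readers who want a feel for the idea before opening the example, here is a minimal pure-Python sketch, not the code from the example itself. It assumes the toy objective f(x, y) = x * y (minimized over x, maximized over y) and compares plain simultaneous gradient descent-ascent with an optimistic update that extrapolates using the previous gradient. The function names `gda`, `ogd`, and `distance_to_saddle` are hypothetical helpers chosen for illustration.

```python
import math


def grads(x, y):
    # Assumed toy objective f(x, y) = x * y, so df/dx = y and df/dy = x.
    return y, x


def gda(x, y, lr=0.1, steps=2000):
    # Plain simultaneous gradient descent (on x) and ascent (on y).
    for _ in range(steps):
        gx, gy = grads(x, y)
        x, y = x - lr * gx, y + lr * gy
    return x, y


def ogd(x, y, lr=0.1, steps=2000):
    # Optimistic update: step along 2 * g_t - g_{t-1} instead of g_t.
    # The "previous" gradient is initialised to the current one, so the
    # first step coincides with a plain descent-ascent step.
    prev_gx, prev_gy = grads(x, y)
    for _ in range(steps):
        gx, gy = grads(x, y)
        x = x - lr * (2 * gx - prev_gx)
        y = y + lr * (2 * gy - prev_gy)
        prev_gx, prev_gy = gx, gy
    return x, y


def distance_to_saddle(point):
    # The unique saddle point of f(x, y) = x * y is (0, 0).
    return math.hypot(*point)


if __name__ == "__main__":
    start = (1.0, 1.0)
    print("start:", distance_to_saddle(start))
    print("plain descent-ascent:", distance_to_saddle(gda(*start)))
    print("optimistic:", distance_to_saddle(ogd(*start)))
```

On this objective, the plain update moves away from the saddle point at every step, while the optimistic update contracts toward it for step sizes below 0.5.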

Contrib Examples

Examples that make use of the 🔧 Contrib module.

Differentially private convolutional neural network on MNIST.

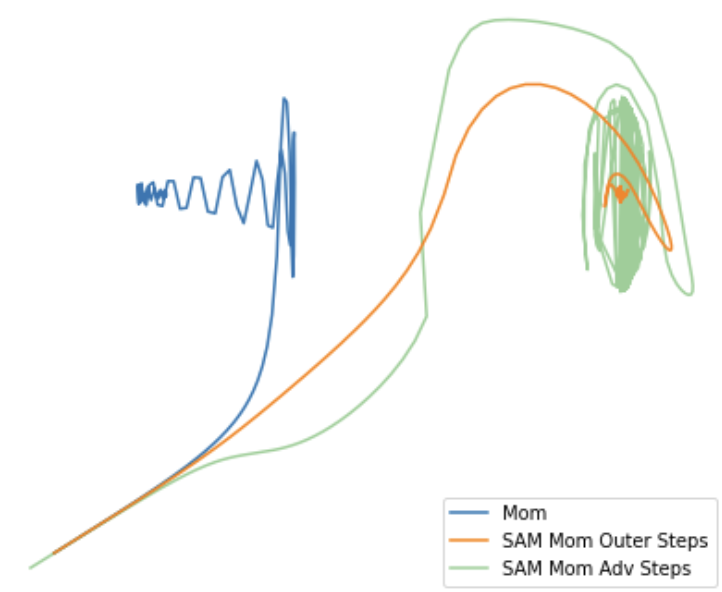

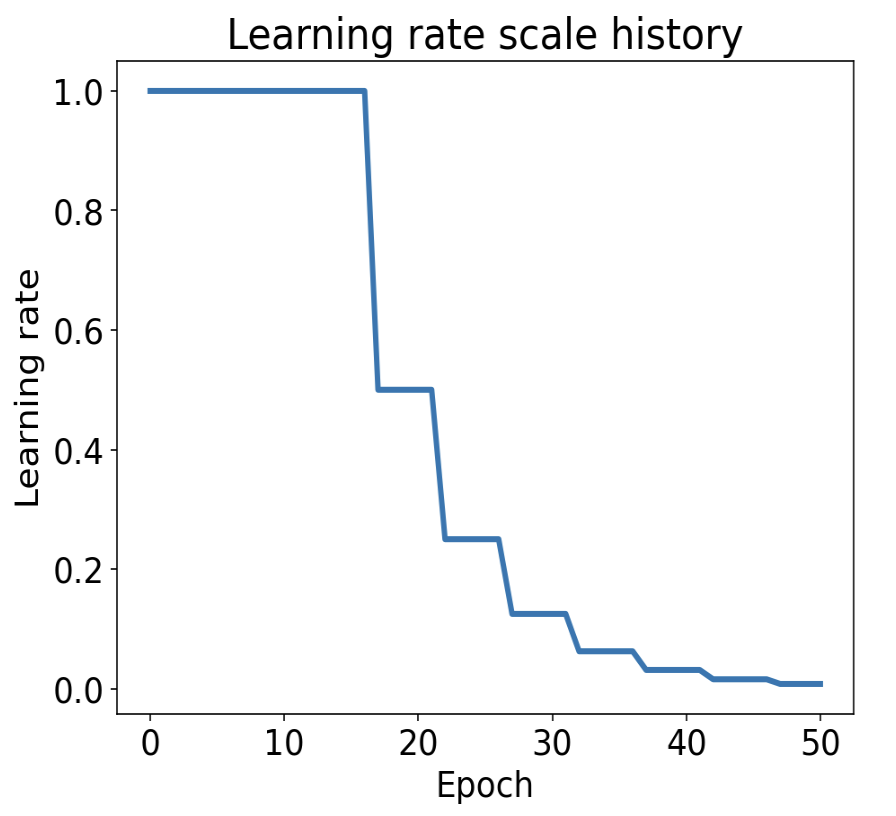

Reduce on Plateau Learning Rate Scheduler

Example usage of the reduce_on_plateau learning rate scheduler.
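
As a rough illustration of what a reduce-on-plateau rule does, the sketch below tracks the best loss seen so far and shrinks a learning-rate scale once the loss has failed to improve for `patience` consecutive observations. It is a simplified pure-Python sketch under stated assumptions (positive losses, a relative improvement threshold), not the library's implementation. The helper name `reduce_on_plateau_scales` and its parameters are hypothetical.

```python
def reduce_on_plateau_scales(losses, factor=0.5, patience=2, rtol=1e-4,
                             min_scale=0.0):
    """Return the learning-rate scale in effect after each observed loss.

    Assumes positive losses. A loss counts as an improvement only if it is
    below the best loss so far by a relative margin of `rtol`.
    """
    best = float("inf")
    bad_steps = 0  # consecutive observations without improvement
    scale = 1.0
    scales = []
    for loss in losses:
        if loss < best * (1.0 - rtol):
            best = loss
            bad_steps = 0
        else:
            bad_steps += 1
        if bad_steps >= patience:
            # Plateau detected: shrink the scale and restart the count.
            scale = max(scale * factor, min_scale)
            bad_steps = 0
        scales.append(scale)
    return scales


if __name__ == "__main__":
    history = [1.0, 0.8, 0.8, 0.8, 0.8, 0.7]
    print(reduce_on_plateau_scales(history))
```

The returned scale would multiply a base learning rate, so the effective step size only shrinks when progress stalls.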